High-Performance Computers Provide Speed to Solve Complex Power Industry Challenges

The Story in Brief

Researchers increasingly use high-performance computers to tackle the power industry’s diverse technical challenges, from grid operations to nuclear accident mitigation. Complex analyses that once required weeks or months now are possible in just minutes.

EPRI researcher Richard Wachowiak needed to evaluate multiple strategies and consider as many fixes as possible to minimize the impact of nuclear plant accidents. Examples of such fixes included adding water to the reactor pressure vessel and installing filters in the reactor’s drywell vent. Working with Phoebe, EPRI’s high-performance computer, he simulated more than 500 different scenarios in just a few hours. Before Phoebe joined the EPRI team, Wachowiak would have needed nearly a month to run just 50 similar simulations.

If the electric power industry has contributed to the rise of high-performance computers by providing the energy to run them, these machines are now returning the favor, enabling new ways for researchers to examine and solve diverse technical challenges. With high-performance computing, scientists can run models and simulations that would otherwise be too expensive, complex, or dangerous. The key factor is speed, a direct result of parallel computing. By splitting tasks and running each part independently, parallel computing enables researchers to rapidly conduct thousands of simulations and run virtual experiments that would be impossible with regular computers. EPRI’s Phoebe can perform 8 trillion calculations per second—a thousand times faster than a typical personal or work computer.
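The idea of splitting work into independent parts can be sketched in a few lines of Python. This is only an illustration, not EPRI's software: `run_scenario` is a hypothetical stand-in for one simulation, and the worker count of 4 is an arbitrary assumption. Each scenario needs nothing from the others, so a pool of worker processes can handle them side by side.

```python
from concurrent.futures import ProcessPoolExecutor


def run_scenario(scenario_id: int) -> float:
    """Hypothetical stand-in for one independent simulation run."""
    total = 0.0
    for step in range(1, 2_000):
        total += (scenario_id % 7 + 1) / step
    return total


def run_all(scenario_ids, workers: int = 4) -> list:
    """Hand each scenario to a pool of worker processes; results keep input order."""
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(run_scenario, scenario_ids))


if __name__ == "__main__":
    # 500 scenarios, matching the scale of the study described above.
    results = run_all(range(500))
    print(f"completed {len(results)} scenarios")
```

On a cluster the same pattern applies, with the pool spread across many machines rather than a handful of cores.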

Preparing for the Unlikely

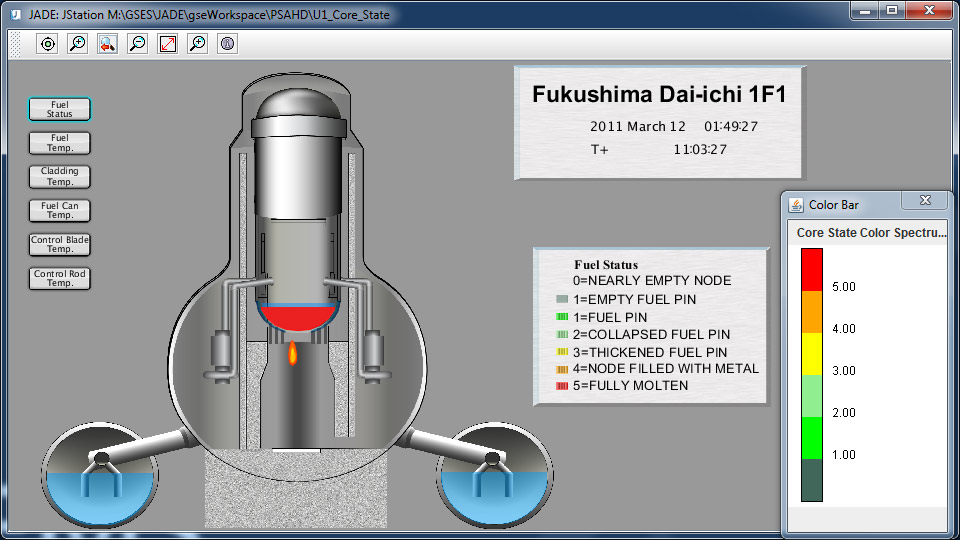

Although nearly all nuclear power plants have operated safely and reliably over their lifetimes, many people associate nuclear power with images of Three Mile Island, Chernobyl, and Fukushima Daiichi. For Wachowiak, principal technical leader in EPRI’s Risk and Safety Management Program, understanding how to limit the impact of such accidents inspires and drives his research.

Wachowiak works on modeling and simulation of severe accident mitigation strategies at nuclear plants. He looks for ways to mitigate accidents by evaluating many factors, such as the probability that a certain component fails during an earthquake.

In a recent study, Wachowiak and his team used Phoebe and EPRI’s Modular Accident Analysis Program software to run more than 120,000 simulations of nuclear accident scenarios. The simulations estimated each scenario’s likelihood by generating event tree diagrams that show what happens after an initiating event. The diagrams were modified to reflect what occurs with a mitigating strategy, such as injecting water to cool the reactor.
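A toy version of an event tree calculation might look like the sketch below. It is not the Modular Accident Analysis Program, and every number is a made-up assumption: the initiating event frequency, the two mitigation steps, and their failure probabilities are hypothetical, and the branches are treated as independent, which real event trees often do not assume.

```python
from itertools import product

# Hypothetical values, for illustration only.
INITIATING_EVENT_FREQ = 1e-4  # assumed frequency per reactor-year
BRANCH_FAILURE_PROB = {
    "water_injection": 0.05,  # assumed chance the step fails
    "vent_filter": 0.10,      # assumed chance the step fails
}


def enumerate_sequences(init_freq, failure_prob):
    """Return branch names and the frequency of every success/failure path."""
    names = list(failure_prob)
    sequences = {}
    # Each path is a tuple of booleans: True means that step succeeded.
    for outcome in product((True, False), repeat=len(names)):
        freq = init_freq
        for name, succeeded in zip(names, outcome):
            p_fail = failure_prob[name]
            freq *= (1.0 - p_fail) if succeeded else p_fail
        sequences[outcome] = freq
    return names, sequences


if __name__ == "__main__":
    names, sequences = enumerate_sequences(INITIATING_EVENT_FREQ, BRANCH_FAILURE_PROB)
    for outcome, freq in sequences.items():
        label = ", ".join(
            f"{n} {'ok' if ok else 'fails'}" for n, ok in zip(names, outcome)
        )
        print(f"{label}: {freq:.2e} per reactor-year")
```

Changing a failure probability to reflect a mitigating strategy, then rerunning, mirrors in miniature how the modified diagrams compare outcomes with and without a fix.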

Wachowiak’s team investigated the ways each strategy could succeed and fail and calculated the potential effects of each scenario, such as the amount of radioactive material released. Then they used a code developed by the U.S. Nuclear Regulatory Commission (NRC) to model the accident’s public health impacts, given factors such as weather patterns, wind direction and speed, and the nearby population.

After running and analyzing thousands of simulations, researchers determined that adding water to a damaged reactor core was essential to any mitigation strategy. Without it, containment of radioactive material is unlikely, regardless of any other steps taken.

With the results of these simulations, nuclear plant operators can more effectively identify necessary improvements and mitigation strategies at their facilities. “We work to help utilities make informed decisions about where they should invest their resources to mitigate these scenarios,” said Wachowiak.

“The strategies that we are researching go over and above what is already in a plant’s licensing basis,” he said. “This work will lead to a set of actions at nuclear plants that address a broader range of accident scenarios, increasing industry safety.”

Helping Grid Operators Respond Adeptly to Disturbances

What happens when lightning hits a transmission line or a truck crashes into a transmission tower? Such disturbances can have devastating effects on the grid in minutes or even seconds. Grid operators need huge computational power to quickly analyze grid conditions and inform timely responses. The objective is to assess transient stability, the grid’s ability to keep its equipment operational after disturbances. Unfortunately, performing hundreds of simulations in a matter of minutes is beyond the capabilities of most utility computer systems.

Enter high-performance computing. For two years, EPRI has worked with researchers at Lawrence Livermore National Laboratory to modify and upgrade the Extended Transient Midterm Simulation Program, a transient stability analysis tool that EPRI developed in the 1980s. Back then, researchers relied on a single computer processor, so evaluating several grid scenarios required significant time.

This time, the work was done on Livermore’s Cab, a 431-teraflop supercomputer capable of large parallel simulations of contingencies, or failures of various parts of the grid. Researchers ran simulations of thousands of contingencies in parallel, beginning with one or two triggering events, then simulating possible outcomes.

“We’re building a program that grid operators can run every few minutes to process a large number of contingencies so that they can have a nearly real-time picture of the grid’s security condition,” said EPRI’s Alberto Del Rosso, who led the project. “This leads to good, timely decisions on control actions to prevent adverse effects of grid disturbances.”

The research demonstrated the advantage of parallel computing. One study ran 4,096 contingencies on 4,096 processors in just 200 seconds. Such an operation on a non-parallel computer system would require about 20 hours.
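The figures above can be turned into a rough speedup estimate. The short calculation below uses only the numbers quoted in the study and treats "about 20 hours" as exactly 20 hours, which is an assumption made for the arithmetic.

```python
def speedup(serial_seconds: float, parallel_seconds: float) -> float:
    """How many times faster the parallel run finished."""
    return serial_seconds / parallel_seconds


SERIAL_SECONDS = 20 * 3600   # about 20 hours on a non-parallel system
PARALLEL_SECONDS = 200       # reported time on 4,096 processors
CONTINGENCIES = 4096

if __name__ == "__main__":
    print(f"speedup: {speedup(SERIAL_SECONDS, PARALLEL_SECONDS):.0f}x")
    # Average serial time implied for a single contingency.
    print(f"serial seconds per contingency: {SERIAL_SECONDS / CONTINGENCIES:.1f}")
```

By this estimate the parallel run finished roughly 360 times faster, the difference between an overnight job and one that fits inside an operator's decision window.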

EPRI’s upgraded transient stability analysis program operating on today’s high-performance computers can help utilities prevent and respond in real time to crises that threaten power supply. For example, during transmission line faults, the software might inform a quick decision to redirect power flow or start a new generator. In the next two years, EPRI plans to assess the viability of running an upgraded version of the tool on Phoebe, which could provide further research enhancements in this critical area.

From Falcon to BISON: Fuel Performance Software and “Virtual Reactors”

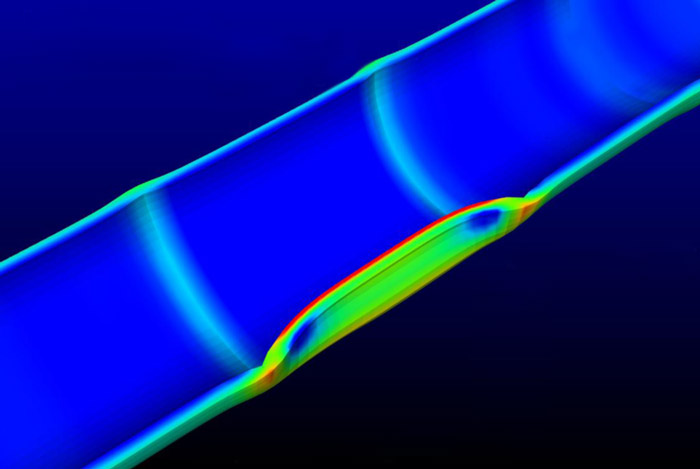

Most current software used by utilities to simulate the complex inner workings of nuclear reactor cores offers only a low-resolution, two-dimensional view of operations. Soon Phoebe will be joined by VERA (Virtual Environment for Reactor Applications). This large software suite will enable 3-D monitoring of any reactor core element at any time, with unprecedented clarity.

Under development at the Consortium for Advanced Simulation of Light Water Reactors, VERA will be used to simulate situations that would be impractical—and in some cases, catastrophic—to recreate in the real world. These include corrosion-related failures, coolant leaks, and fuel rod vibrations that can lead to dangerous fuel leaks. Running on a high-performance computer, VERA will complete complex simulations in a matter of hours that would require days or weeks with a single processor. VERA’s higher-resolution view could help plant operators to better anticipate and respond to concerns.

EPRI Project Engineer Brenden Mervin used Phoebe to test BISON-CASL, the fuel performance component of VERA’s virtual reactor. EPRI was well positioned for this task because it developed Falcon, a fuel performance analysis code used by the nuclear industry for the past four decades.

Mervin tested simple simulations, such as ramping up power of a shortened fuel rod, as well as more complex scenarios—for example, ramping up a full-length rod to full power, shutting it down, and then ramping it back up again. “Ramping back up is when you get the peak stress and when certain failures usually occur,” said Mervin. “We do these simulations to help predict and prevent fuel failures that could cost the utility a million dollars or more.”

EPRI found that BISON-CASL’s calculations for certain cladding stresses in virtual fuel rods were within 0.5% of Falcon’s numbers, affirming the new code’s reliability. “It wasn’t practical to compare BISON-CASL with real-world reactor data because those numbers are not readily available,” said Mervin. “Falcon has been compared and validated against real reactor data for many years, so it’s a good benchmark.”

With BISON-CASL’s 3-D capabilities, researchers will gain a better understanding of what happens to fuel pellets during an accident. Because pellets are not always symmetrical, two-dimensional modeling may overlook some critical features. The 3-D simulations will give researchers a clearer picture of pellet imperfections that can lead to greater stress during power ramps.

EPRI is also tasked with ensuring that VERA is a product that the nuclear industry can use. A development version of VERA will be available this year under a testing and evaluation license. “Will utilities need a supercomputer with 200,000 processors to run VERA?” said Mervin. “That would not be practical. But if they can run it with a small industry-class high-performance computer, we will have succeeded.”

Phoebe’s Future

EPRI is using high-performance computers for atmospheric modeling and to examine potential carbon capture technologies. Planned applications include the thermal-hydraulic response of containment systems in nuclear plants and software to characterize the degradation and performance of nuclear components.

For Mervin, access to Phoebe provides a huge step forward for simulation and modeling on large-scale projects. “In the past, you might want to run scenarios, but it would take two to four minutes a pop,” he said. “If you’ve got 264 scenarios, that’s something you’re not going to do. With Phoebe at your fingertips, 264 scenarios is not a problem. You just throw them all in the computer cluster, and the results come flowing out.”

Key EPRI Technical Experts:

Richard Wachowiak, Alberto Del Rosso, Brenden Mervin

If you would like to contact the technical staff for more information, send your inquiry to techexpert@eprijournal.com.